Welcome to Impact Factor, your weekly dose of commentary on a new medical study. I’m Dr F. Perry Wilson from the Yale School of Medicine.

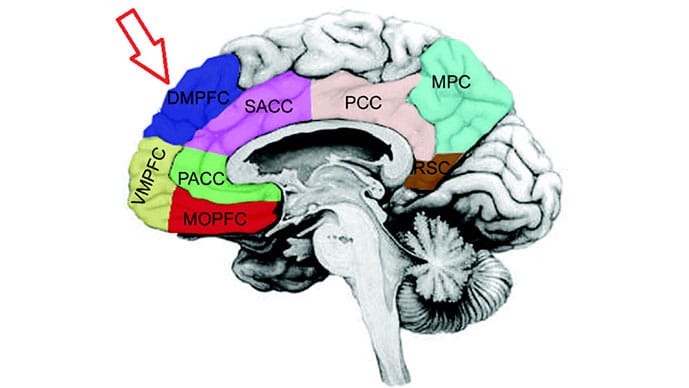

A new study finds that this area of the brain, the ventromedial prefrontal cortex, is the neurological seat of moral consistency. Put another way, this is the area of the brain that hypocrites lack.

It’s a totally fascinating study that challenges our notions of ethics and morality as transcendent features of consciousness. It says no: they are neurological patterns, no different from the processes that let us walk, talk, or add up numbers in our heads.

But before we go throwing all the politicians into an MRI scanner (I think I know what the results would show anyway), let’s dig into how scientists can actually test your moral compass.

The study, appearing in Cell Reports, employed functional MRI to monitor brain activity in 58 healthy right-handed adults as they engaged in a couple of tasks specifically designed to test their moral inconsistency — their hypocrisy, basically.

Let me walk you through the experimental setup. The participants were taught to play a simple game with a partner for real money. The participants could see a number on a card, but the partner could see only a blurry version. In front of both players, the researchers said that they would both be paid if the participant could get the partner to say the number accurately.

The researchers took each participant aside and told them a secret. If they got their partner to say a number higher than what was on the card, they would earn more money, but the partner would not. Participants could betray their partner for personal gain.

Into the MRI scanner they went, where they were presented with two options: an honest option, where they would win a small amount of money, and a deceptive option, where they would win more money. Over different rounds of this game, the experimenters could vary two factors: how big a lie they were asking the participant to tell and the reward for that deception.

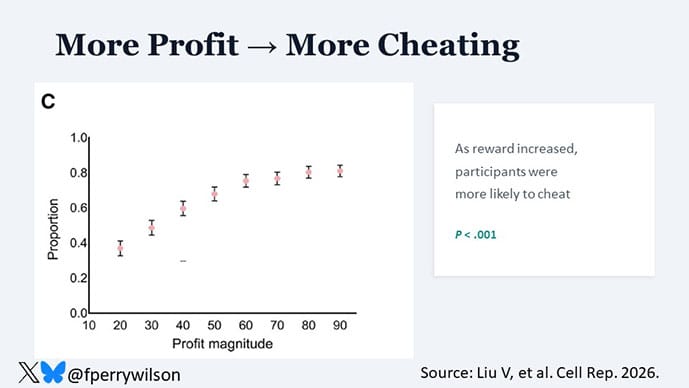

What you see here are the results of those tests. With more profit on the table, people were more likely to try to deceive their partner.

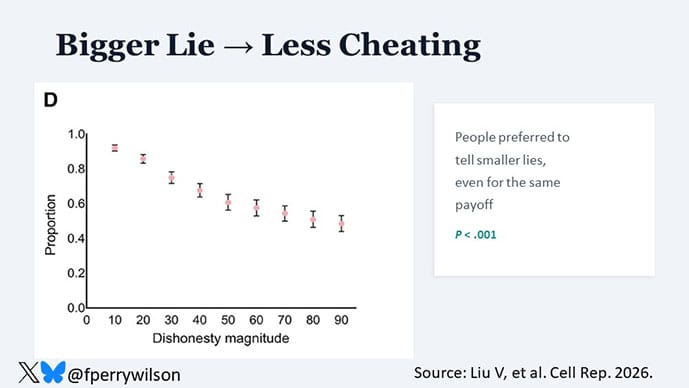

Of interest, the participants were less likely to deceive their partner when the dishonesty magnitude was higher. For example, if the number to guess was 35, they were more comfortable deceiving their partner into guessing 45 as opposed to 95, even though in the end it all amounted to the same thing. So, basically, we know that people are willing to lie for profit but prefer to tell smaller lies.

That’s not the crux of the study, however.

Researchers positioned participants so they could watch others undergoing the experiment. They became third-party observers to these deceptive tests. Each time they watched others deceive or not deceive their partner, the participant rated how immoral that behavior was.

We now have a measurement of an individual’s personal behavior in a situation where deception is on the table, as well as their judgment of others in the exact same situation.

So, what do you think happened? Were the participants more judgmental of others than of themselves?

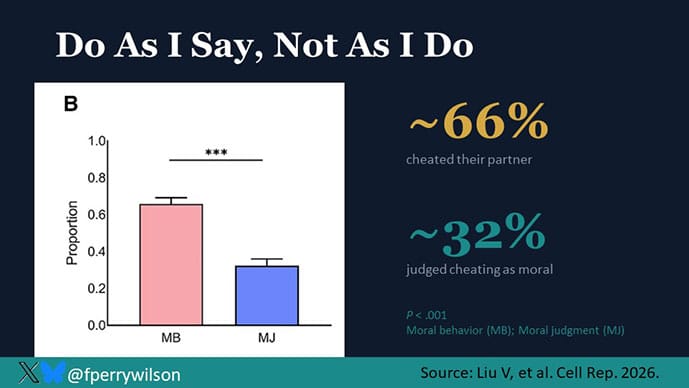

Of course they were. On average, individuals cheated their partner two-thirds of the time, but they judged others’ behavior as moral only 35% of the time. Straight-up “do as I say, not as I do” stuff.

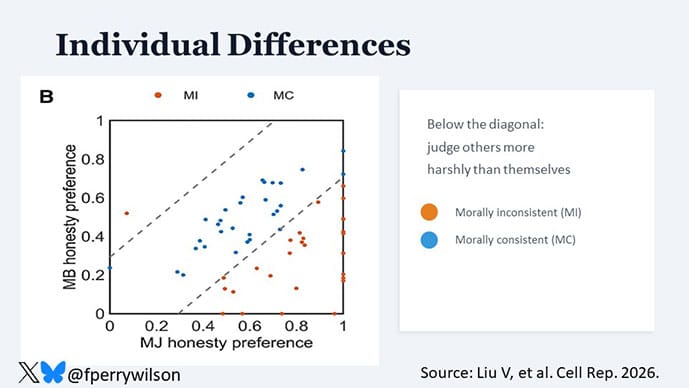

The individual-level data are fascinating. Here you see a scatterplot of people’s personal behavior vs their judgment of others. In the bottom-right triangle are people who judge others more harshly than themselves. In the upper left, you see the lone individual who gave more slack to others than they exhibited on their own.

OK, but a cool social psychology experiment is just the beginning. Remember, these people were all in functional MRI scanners while they were deceiving, so we can see what is happening in their brains as this is all going on. And that’s critical, because you may be wondering, as I was while reading this study, what people are thinking when they are being deceptive. Do they know they are being immoral, or do they ignore that in favor of the profit? The brain scans show the reality of the situation.

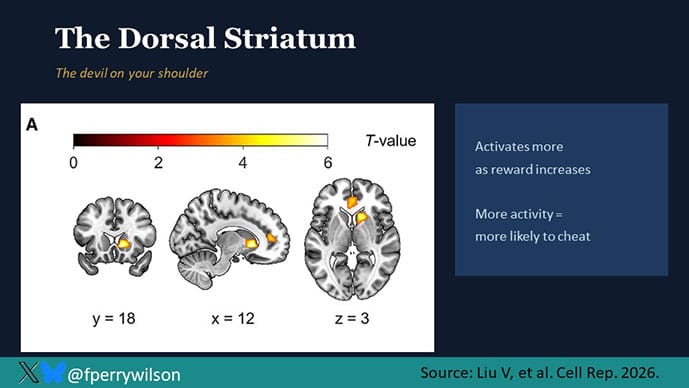

To make sense of this, we need to create a mini map of this area of the brain. This is the dorsal striatum, previously shown to be important not only in motor control but also in decision-making.

This brain region responded more strongly as the reward for dishonesty increased. And in fact, the more active this area was, the more likely someone was to cheat. We’ll shorthand this part of the brain as the base desire. It wants that money.

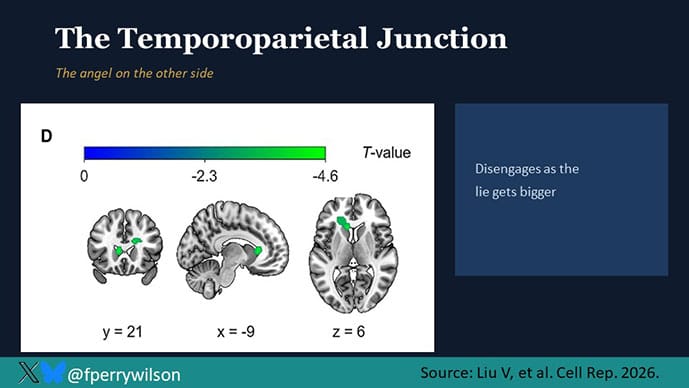

Then we have the temporoparietal junction (TPJ) and the inferior parietal lobule (IPL).

These regions lit up more when the dishonesty magnitude was smaller. Essentially, this is the part of the brain that is socially conscious — worried about how we might be perceived by others. It’s happier when we are lying less. If the dorsal striatum is the devil on your shoulder, the TPJ and IPL are the angels on the other side.

But remember, this study isn’t about whether people are moral or immoral; it’s about whether they are morally consistent. We know that the average person will behave in a way that they would call immoral when observing it in someone else. Is that because their brain forgets the rules of morality when they are personally in the situation? The MRI says no. The knowledge of what is moral in this situation appeared to reside in the dorsomedial prefrontal cortex.

This section lit up when individuals were judging others as immoral and when they were acting immorally themselves. In other words, people knew they were doing something wrong; they did it anyway.

So, we have the devil telling you to take the money (or appreciating that other people take money). We have the angel telling you taking the money is wrong (and judging others for doing the same). And we have the rulebook, which remains unchanged across both scenarios. So why are some people more morally consistent than others?

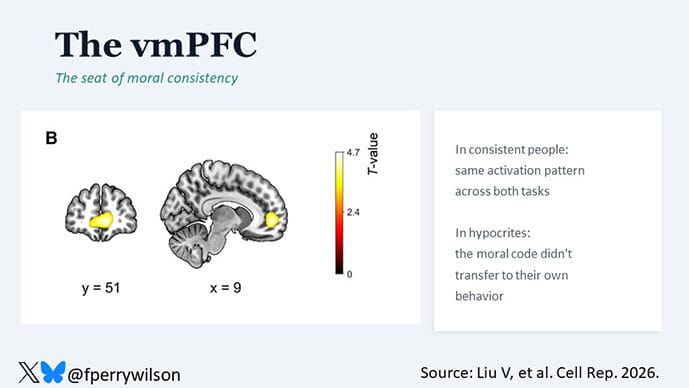

The researchers pinpoint this to the ventromedial prefrontal cortex, that part of the brain I showed you earlier.

Those who were highly morally consistent showed high activity here, along with strong connections to these other important regions. Those who were morally inconsistent showed less connectivity here, particularly when they themselves were behaving immorally compared with when they were judging others. To me, this represents the self-reflection center of the brain. People know the rules and can apply them; they just don’t think about them when they are the ones breaking them.

I know, this sounds a bit like science fiction. And I always worry about mistaking correlation for causation in situations like this.

That’s why it’s so cool that the researchers went one step further.

If the ventromedial prefrontal cortex is the seat of moral consistency, disrupting it should make people less morally consistent.

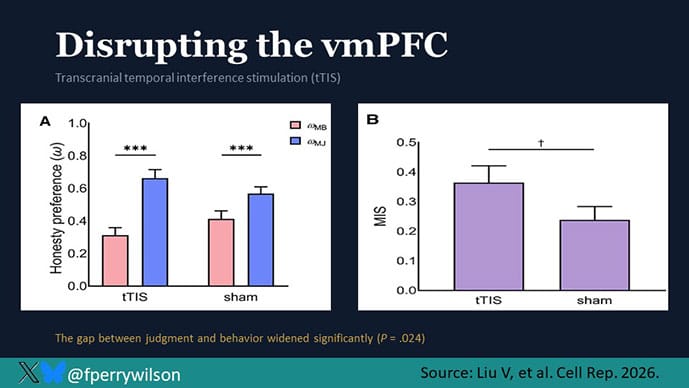

To test this, the researchers used a technique called transcranial temporal interference stimulation to briefly disrupt the vmPFC and ran a group of 52 subjects through the same experiment. They became more judgy of others and even less judgy of themselves. The “moral inconsistency score” rose significantly. This literally made people more hypocritical.

The brain is just endlessly fascinating, and I don’t think studies like this take away any of the mystery. That behaviors and traits as complex as hypocrisy have a neurological correlate may seem wild, but of course, what is the other option? We are, fundamentally, our brains. And if our brains change, who we are changes as well. We have found nothing else that makes us… us.

I am left trying to figure out what to do with this information. Should we be less judgmental of hypocrites? Should we forgive our politicians their trespasses and blame their behavior on a woefully disconnected ventromedial prefrontal cortex? Or should we use this to remind ourselves that all of us tend to judge others more harshly than we judge ourselves, and to work harder to extend to others the grace we reserve for our own actions? It’s a tall order. But the brain is nothing if not adaptable. And if the first step to practicing what you preach is knowing why you don’t, well, consider yourself informed.

F. Perry Wilson, MD, MSCE, is an associate professor of medicine and public health and director of Yale’s Clinical and Translational Research Accelerator.

https://www.medscape.com/viewarticle/rules-thee-not-me-neuroscience-double-standard-2026a100086q
